Machine learning algorithms have become increasingly important in data science, providing powerful tools for analyzing large amounts of data and finding relationships within it. Two ensemble techniques that have gained significant attention are boosting and bagging. While both methods aim to combine weak learners into a strong learner with better accuracy, they differ considerably in their approaches, which affects how they are used.

In this article, we’ll delve into the specifics of these two methods, highlight their differences through real-life examples, and explain why boosting stands out as the better option in most cases.

What is Boosting?

Boosting is a popular ensemble technique that trains a sequence of models iteratively. Its objective is to combine weak learners into a strong learner. Boosting works by assigning more weight to the examples the previous model got wrong, so that the next model concentrates on those mistakes. Popular boosting algorithms used in industry include AdaBoost, CatBoost, and XGBoost.

To understand the mechanism of boosting, consider the facial recognition feature on your phone. A feature like this can use techniques such as AdaBoost or gradient boosting to train the model on many images, including those without faces. By assigning more weight to misclassified images, the model learns to distinguish between what to detect and what not to detect.
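The reweighting idea described above can be sketched as a single AdaBoost-style round. This is a minimal illustration, not a full implementation: the function name `adaboost_reweight` and the toy labels are assumptions made for the example, and labels are assumed to be encoded as -1 and +1.

```python
import math


def adaboost_reweight(weights, y_true, y_pred):
    """One AdaBoost-style round: return (alpha, new_weights).

    weights: current sample weights, assumed to sum to 1
    y_true, y_pred: labels encoded as -1 or +1
    """
    # Weighted error of the current weak learner.
    err = sum(w for w, t, p in zip(weights, y_true, y_pred) if t != p)
    err = min(max(err, 1e-10), 1 - 1e-10)  # guard against log(0)
    # The learner's say in the final vote: larger when its error is smaller.
    alpha = 0.5 * math.log((1 - err) / err)
    # Up-weight mistakes, down-weight correct predictions, then renormalize.
    raw = [w * math.exp(-alpha * t * p)
           for w, t, p in zip(weights, y_true, y_pred)]
    total = sum(raw)
    return alpha, [r / total for r in raw]


# Hypothetical weak learner that is wrong only on the last of five points.
y_true = [1, 1, -1, -1, 1]
y_pred = [1, 1, -1, -1, -1]
weights = [0.2] * 5

alpha, new_weights = adaboost_reweight(weights, y_true, y_pred)
print(alpha, new_weights)
```

After the update, the single misclassified point carries half of the total weight, so the next weak learner is pushed to get it right.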

What is Bagging?

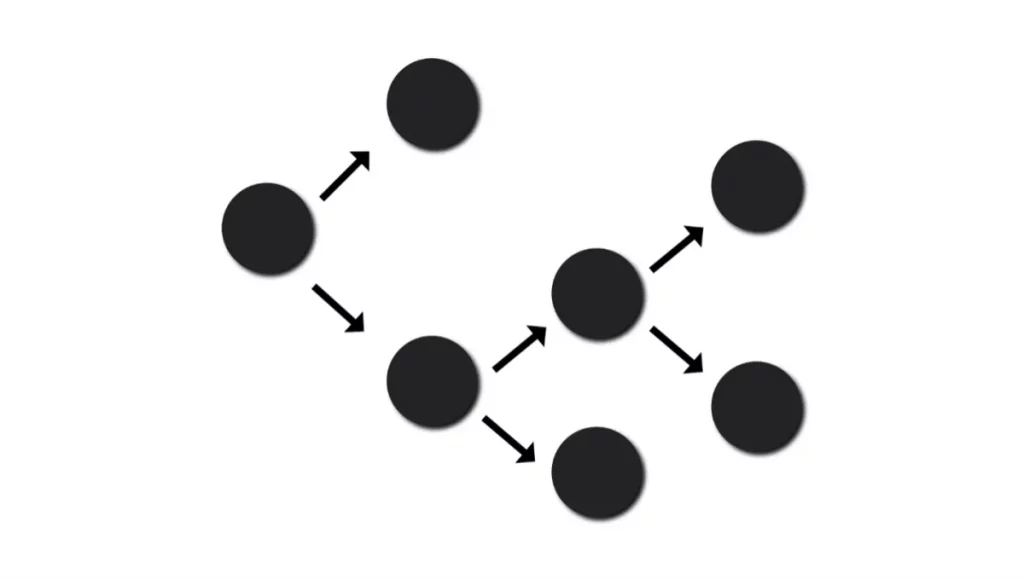

Bagging, short for Bootstrap Aggregating, is another widely used ensemble technique. It trains each learner on a different training set, drawn from the original data by random sampling with replacement. Because the learners are independent, they can be trained in parallel, and combining them reduces the variance of the results. Each learner makes its own prediction, and the final (strong) prediction is obtained either by a voting scheme or by averaging the results of the individual learners.

Speech recognition models are a great example of where bagging helps. Since variance is an important factor in these models, they’re trained on different audio datasets that record words in different accents and speaking styles. The predictions from each model are averaged to create a final strong learner with better accuracy.
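The bootstrap-then-vote procedure can be sketched in a few lines of plain Python. Everything here is an illustrative assumption: the one-dimensional toy data, the helper names, and the very simple threshold learner standing in for a real model.

```python
import random
from collections import Counter


def bootstrap_sample(data, rng):
    """Draw len(data) items uniformly at random, with replacement."""
    return [rng.choice(data) for _ in data]


def fit_threshold_learner(sample):
    """Toy learner: predict 1 when x is above the midpoint of the class means."""
    xs0 = [x for x, y in sample if y == 0]
    xs1 = [x for x, y in sample if y == 1]
    if not xs0 or not xs1:
        # Degenerate sample containing one class only: always predict it.
        only = 0 if xs0 else 1
        return lambda x: only
    threshold = (sum(xs0) / len(xs0) + sum(xs1) / len(xs1)) / 2
    return lambda x: 1 if x > threshold else 0


def bagging_fit(data, n_models, seed=0):
    """Train each learner independently on its own bootstrap sample."""
    rng = random.Random(seed)
    return [fit_threshold_learner(bootstrap_sample(data, rng))
            for _ in range(n_models)]


def bagging_predict(models, x):
    """Aggregate by majority vote."""
    votes = Counter(model(x) for model in models)
    return votes.most_common(1)[0][0]


data = [(1.0, 0), (1.5, 0), (2.0, 0), (2.5, 0),
        (6.0, 1), (6.5, 1), (7.0, 1), (7.5, 1)]
models = bagging_fit(data, n_models=25)
print(bagging_predict(models, 0.0), bagging_predict(models, 9.0))
```

Since no learner depends on another, the loop in `bagging_fit` could be distributed across workers. For regression, the vote would be replaced by an average.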

Bagging Vs. Boosting – What’s the Difference?

You may be wondering which of these two techniques you should use when building a model for your specific case, and whether both have the same aim. While there are applications where both methods work in much the same way, there are areas where they differ considerably.

Bagging involves creating multiple independent models using bootstrap samples of the training data and then averaging (or voting on) their predictions to make a final prediction. Boosting, on the other hand, involves creating a sequence of models where each model tries to correct the errors of the previous one. Boosting typically helps most when the base model underfits (high bias), while bagging is more effective when the base model overfits (high variance).
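To make "each model tries to correct the errors of the previous one" concrete, here is a rough sketch of boosting for regression in the gradient-boosting style, where each new weak learner is fitted to the residuals of the ensemble so far. The helper names, the toy data, and the learning rate of 0.5 are assumptions chosen for illustration only.

```python
def fit_regression_stump(xs, targets):
    """Fit a one-split regressor (a very weak learner) by squared error."""
    best = None
    for split in sorted(set(xs))[1:]:  # every split leaves both sides non-empty
        left = [t for x, t in zip(xs, targets) if x < split]
        right = [t for x, t in zip(xs, targets) if x >= split]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = (sum((t - left_mean) ** 2 for t in left)
               + sum((t - right_mean) ** 2 for t in right))
        if best is None or sse < best[0]:
            best = (sse, split, left_mean, right_mean)
    _, split, left_mean, right_mean = best
    return lambda x: left_mean if x < split else right_mean


def boost(xs, ys, n_rounds, learning_rate=0.5):
    """Sequentially fit stumps to the residuals of the ensemble built so far."""
    base = sum(ys) / len(ys)  # round 0: predict the mean
    preds = [base] * len(xs)
    stumps = []
    for _ in range(n_rounds):
        residuals = [y - p for y, p in zip(ys, preds)]  # current errors
        stump = fit_regression_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * stump(x) for x, p in zip(xs, preds)]
    return lambda x: base + learning_rate * sum(s(x) for s in stumps)


def mse(model, xs, ys):
    """Mean squared error of a model on the given points."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


xs = [0, 1, 2, 3, 4, 5, 6, 7]
ys = [x * x for x in xs]  # a nonlinear target that one stump cannot fit

for rounds in (0, 1, 5, 20):
    print(rounds, round(mse(boost(xs, ys, rounds), xs, ys), 3))
```

The training error shrinks as rounds are added, which is the bias reduction boosting is known for. Unlike bagging, round k cannot start before round k-1 has finished, because it needs that round's residuals.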

Let’s explore the similarities and differences between both methods in detail so you can understand which technique you should pick depending upon your situation.

Similarities:

- Diverse: Both methods encourage diversity by training multiple models on different views of the data: boosting by reweighting the examples that earlier models got wrong, and bagging by drawing multiple random subsets of the training dataset.

- Flexible: It is easy to use both algorithms with different machine learning techniques, making them scalable and flexible.

- Robust to noisy data: Both methods produce robust learners because the final prediction comes from the consensus of multiple models instead of one, which makes it less sensitive to outliers and noise in the data.

- Reduction in errors: Both focus on reducing the error rate of the final result, bagging mainly by decreasing variance and boosting mainly by decreasing bias.

Differences:

- Bias-Variance Tradeoff: While the boosting algorithm focuses on reducing the bias levels of the results to avoid underfitting, the bagging algorithm reduces the variance in the results to prevent overfitting.

- Training: The way the models are trained differs: boosting adopts an iterative process that trains the models one by one, whereas bagging trains multiple models independently and simultaneously.

- Types of Models: Bagging usually combines complex models with low bias and high variance, such as fully grown decision trees (random forests are the best-known example), whereas boosting usually combines weak models with low variance and high bias, such as shallow decision trees, which are quick to train.

- Properties of datasets: Each method works differently depending on the datasets provided to the models. Boosting works better in imbalanced datasets, while bagging performs better with noisy datasets.
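The variance side of the bias-variance point above can be illustrated with a small simulation. It is a sketch under an optimistic assumption: each "model" is just the true value plus independent Gaussian noise, whereas real bagged models are trained on overlapping samples and are therefore correlated, so the reduction in practice is smaller. The function names and the constants are made up for the example.

```python
import random
import statistics

rng = random.Random(42)


def noisy_estimate():
    """Stand-in for a high-variance model: the true value 10.0 plus noise."""
    return rng.gauss(10.0, 2.0)


def bagged_estimate(n_models):
    """Average the outputs of n_models independent noisy models."""
    return statistics.mean([noisy_estimate() for _ in range(n_models)])


single = [noisy_estimate() for _ in range(2000)]
bagged = [bagged_estimate(25) for _ in range(2000)]

var_single = statistics.variance(single)
var_bagged = statistics.variance(bagged)
print(var_single, var_bagged)
```

Averaging leaves the mean of the estimate where it was but shrinks its spread, which is why bagging targets overfitting rather than underfitting.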

6 Reasons Why Boosting Is Better in Most Cases

As I’ve already hinted, boosting often performs better than bagging in real-life scenarios such as fraud detection, credit risk analysis, and medical diagnosis. Here’s a more detailed explanation of why this is so:

- A better option in case of complex tasks: Boosting is a more effective option for complex tasks because it can detect complicated patterns in high-dimensional and non-linear datasets. For example, in natural language processing or computer vision tasks, boosting can detect patterns that bagging may miss.

- Accurate results even with less data: Boosting is a useful technique when data is scarce, expensive to collect, complex, or heterogeneous. Because each round concentrates on the data points the current ensemble handles worst, the most informative examples get priority, which can lead to accurate predictions even with less data.

- Better at handling class imbalance: Boosting focuses on the errors of the previous model and tries to correct them. On imbalanced datasets those errors are often minority-class examples, so they receive more weight, leading to more accurate predictions on minority classes. In contrast, plain bagging samples every example with equal probability, which can result in predictions biased toward the majority class.

- Control over noise: Boosting up-weights hard examples, which can include outliers and mislabeled points, so it needs some care with noisy data. Modern implementations provide that control through a small learning rate, subsampling, and robust loss functions that limit the influence of outliers. Bagging dampens noise by averaging, but every subset is drawn from the same training dataset, so systematic errors in that data carry over into its predictions.

- Easy to interpret: Since boosting algorithms typically use simple models such as shallow decision trees, analyzing the results is more manageable, which is especially important in cases such as fraud detection, where you need to explain the predictions being made.

- More efficient: Boosting can be more efficient than bagging because each round trains a small, simple model. It often needs fewer computational resources than bagging many large individual models, although the boosting rounds must run one after another, whereas bagged models can be trained in parallel.

Wrap Up

Bagging and boosting are two of the most popular ensemble methods used in industry. They’re both handy across a wide range of applications, with boosting sometimes being the more suitable approach. The two techniques take different approaches to problem solving, but each proves very effective in its own area.

At the end of the day, the final decision will depend on the requirements of the problem, its complexity, and the accuracy required. Therefore, it is better to understand the strengths and limitations of each technique and study its mechanism before deciding on one.